For the first time in the history of enterprise technology, the people using the technology know more about its potential than the people buying it.

Let that sink in for a moment, because it inverts everything we know about organisational change management - and it's why your traditional approach to building a Centre of Excellence will fail when it comes to AI.

The ChatGPT Moment

Dr. Debbie Qaqish, in her white paper on AI Centres of Excellence (2024), captures this perfectly. She describes watching every major tech evolution of the past four decades - from rotary phones to smartphones, from dial-up internet to cloud computing, from on-premise servers to SaaS platforms. Nothing, she says, was as earth-shaking as the release of ChatGPT on November 30, 2022.

Why? Because every previous technology came with a predictable evolution path. You could see where it was going. You could plan for it. You could define use cases upfront with reasonable accuracy and execute against them.

AI shatters that predictability. We are in unknown territory. And that changes everything about how organisations must adapt.

How We've Always Done Tech Implementation

Let me show you what I mean with a concrete example.

Think about a CRM rollout in the 2010s - let's say Salesforce:

- Leadership identifies the problem: "Our sales pipeline visibility is terrible; deals are falling through cracks"

- Leadership selects the solution: They evaluate vendors and choose Salesforce

- Leadership defines the use cases: Lead tracking, opportunity management, forecasting reports - all documented upfront in requirements

- Workers execute the plan: Sales reps get trained on defined fields, follow mandatory processes, use standardised reports

- Knowledge flows DOWN: "Here's how you'll use it, here's the dashboard you'll look at, here are the fields you'll fill in"

The Centre of Excellence's role in this world? Implementation, training, and optimisation of those predetermined use cases.

This model worked beautifully for decades. The technology was stable. The use cases were knowable. The path was clear.

Enter AI - And Everything Breaks

Now let me show you what's actually happening with AI in organisations today.

I recently worked with a European Customer Support team on AI integration. Here's what we discovered:

Support agents started using AI to draft responses. Nothing revolutionary there - that was the planned use case. But then something interesting happened. Agents began noticing that the AI was identifying sentiment patterns they had never formally tracked. One agent said, "Wait - this AI noticed that customers who use certain phrases are actually asking about X, not Y."

Then they discovered the AI could predict escalation risk based on subtle language cues that nobody had ever documented. These weren't use cases we planned for. These were discoveries made by front-line workers experimenting with the technology.

The knowledge didn't flow down. It flowed up.

The AI CoE's role became capturing these emergent insights and scaling them across teams. Not training people on predetermined workflows but harvesting what workers discovered about AI's capabilities.

The Tacit Knowledge Goldmine

But here's where it gets really interesting - where AI and knowledge management converge in a way that's never been possible before.

Consider financial advisors. I recently delivered a customised program for an insurance client, working with their nationwide team of advisors. These senior advisors hold extraordinary tacit knowledge - the kind that traditional technology could never capture:

Pattern Recognition: "I can tell from a conversation if someone's underinsured." That's not in any manual. That's 20 years of experience reading between the lines.

Client Psychology: "How to explain complex coverage in simple terms. When to push and when to back off. How to have difficult conversations about underinsurance." No CRM workflow can teach this. It's intuitive, contextual emotional intelligence built over thousands of client interactions.

Local/Regional Expertise: Understanding flood zones, weather patterns, crime rates, local business ecosystems, community relationships and networks. This is place-based tacit knowledge that exists in advisors' heads, not in databases.

Claims Wisdom: How to guide clients through claims processes, what to document at the scene, how to advocate for clients with claims teams. Real-world responses to "that's too expensive." How to explain the value of coverage.

Creative Problem-Solving: Which products naturally go together, how to package solutions for different life stages, creative solutions for unique client situations. Each client is different. Senior advisors have a mental library of "I once had a client who..." scenarios that saved the day.

Underwriting Judgment: When to escalate a risk versus handle it, how to present a borderline risk to underwriters, what information underwriters really need.

The traditional tech approach would have built workflows for standard cases, created dropdown menus for common scenarios, documented "best practices" in a manual nobody reads - and missed 80% of the actual value in those advisors' heads.

But here's what we discovered with AI:

When advisors start experimenting with AI in Communities of Practice, something remarkable can happen. The AI can help them articulate their tacit knowledge. A veteran advisor might say: "The AI just explained the pattern I've been following unconsciously for 15 years. I never knew how to teach this to newer advisors, but now I can see it."

AI becomes the externalisation engine - converting "I just know" into "Here's why I know."

And the AI CoE's role in this brave new world? Systematically capturing these discoveries flowing UP from practitioners and scaling them across the entire advisor network.

This Is Pure SECI in Action

If you're familiar with knowledge management theory, you'll recognise Nonaka's SECI model at work:

- Socialisation: Practitioners in Communities of Practice sharing "hey, I tried this with AI and it worked"

- Externalisation: The CoE capturing those tacit discoveries and converting them into documented use cases

- Combination: The CoE synthesising patterns across experiments into frameworks and best practices

- Internalisation: Organisation-wide learning and capability building

The AI Centre of Excellence becomes the knowledge conversion engine - transforming frontline tacit knowledge about AI's emergent capabilities into organisational strategic advantage.

This has never been possible before. Traditional technology couldn't access tacit knowledge. It could only automate explicit processes. AI can help surface, articulate, and scale what people know but can't explain.

Why AI CoEs Are Completely Different

Dr. Qaqish identifies three key differences that make AI Centres of Excellence unlike any CoE you've built before:

1. Continuous big changes vs. step-chain improvement

Traditional tech followed a "pilot, test, deploy, optimise" model. You implemented once, then made incremental improvements. AI doesn't work that way. It requires ongoing adaptation to rapid, sometimes disruptive changes. Your CoE isn't optimising a stable platform - it's managing continuous experimentation and change.

2. Bottom-up vs. top-down

This is the game-changer. Because nobody can predict AI's evolution, initiatives must come from hands-on users experimenting and learning, not from leadership defining use cases upfront. The insights flow up from practitioners, not down from executives.

This inverts traditional change management. Your workers know more about AI's potential applications than your leadership does. The CoE's job is to harvest that knowledge and convert it into organisational capability.

3. Requires more leadership, resourcing, and budget

Unlike other technology CoEs that could operate as "nice to have" side projects staffed by people in their free time, the AI CoE needs dedicated time, real budget, executive clout, new incentives, and structured support.

Why? Because this isn't about implementing a predetermined solution. It's about creating an organisational learning system that can adapt at the speed of AI evolution.

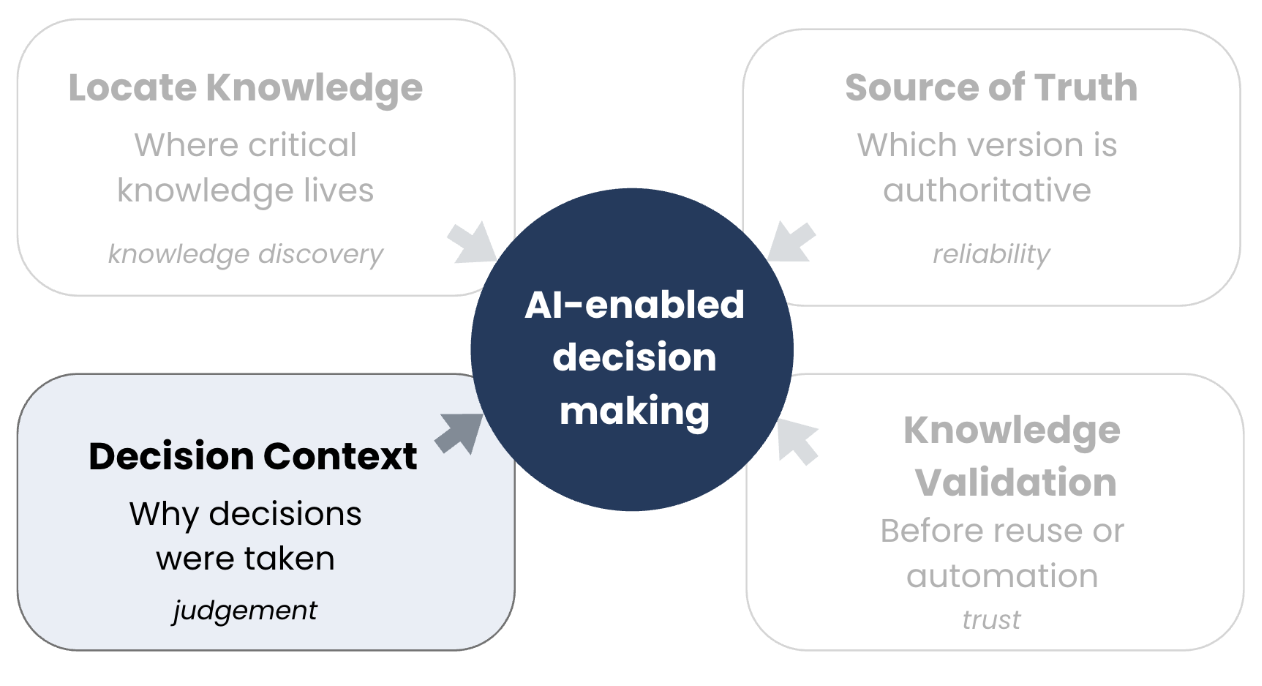

The Two Functions Your AI CoE Must Integrate

Some frameworks separate the AI Council (governance, risk, compliance) from the AI Centre of Excellence (innovation, experimentation, capability building). I've found this creates unnecessary friction and slows everything down.

Your AI CoE needs to integrate both functions:

Governance Function: Policy development, risk assessment, ethical frameworks, compliance. The "don't screw up" guardrails.

Innovation Function: Managed experimentation, capability building, training, best practices. The "make cool stuff happen" engine.

Why keep them together? Because effective experimentation requires governance guardrails. You can't separate "try new things" from "do it safely" without creating either chaos or paralysis. One integrated team moves faster than two teams coordinating.

What This Means For Your Organisation

The implications are profound:

Traditional tech CoE role: Train people to use the platform as designed.

AI CoE role: Harvest what people discover about AI's capabilities and convert it into strategic advantage.

Traditional knowledge flow: Leadership → "Here's the system" → Workers use it

AI knowledge flow: Workers → "Here's what we discovered" → CoE → Organisational transformation

Traditional CoE success metric: Adoption rates, process compliance, efficiency gains

AI CoE success metric: Rate of knowledge capture, speed of capability scaling, tacit knowledge externalisation

Companies that treat their AI CoE like a traditional implementation team will lose to companies that treat it like a knowledge creation system.

Getting Started

If you're building or reimagining your AI Centre of Excellence, here's where to focus:

1. Establish Communities of Practice - Create structured spaces for hands-on workers to experiment and share discoveries. This is your knowledge generation engine.

2. Build knowledge capture systems - Don't just let experiments happen. Systematically document what's being learned, especially tacit knowledge that AI helps surface.

3. Ensure executive clout - Your CoE leader needs power to move quickly on discoveries. When front-line workers find a game-changing application, you need to scale it fast.

4. Resource it properly - This isn't a side project. People need dedicated time to experiment, reflect, and collaborate. Budget for tools, training, and incentives.

5. Integrate governance and innovation - Don't separate them. Build one CoE that can experiment safely and scale learnings responsibly.
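
To make step 2 a little more concrete, here is a minimal sketch of what a discovery log could look like, and how two of the AI CoE metrics mentioned earlier (rate of knowledge capture and speed of capability scaling) might be computed from it. Everything in it is an assumption for illustration: the `Discovery` record, its fields, the two helper functions, and the sample entries with their dates are hypothetical, not a prescribed schema or real client data.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Discovery:
    """One practitioner finding logged by the CoE (hypothetical schema)."""
    team: str
    summary: str
    captured_on: date
    scaled_on: Optional[date] = None  # set once rolled out beyond the original team


def capture_rate(log: list[Discovery], start: date, end: date) -> float:
    """Discoveries captured per week within the inclusive window [start, end]."""
    weeks = ((end - start).days + 1) / 7
    captured = sum(1 for d in log if start <= d.captured_on <= end)
    return captured / weeks


def mean_days_to_scale(log: list[Discovery]) -> Optional[float]:
    """Average days from capture to rollout, counting scaled discoveries only."""
    gaps = [(d.scaled_on - d.captured_on).days for d in log if d.scaled_on]
    return sum(gaps) / len(gaps) if gaps else None


# Illustrative entries only; the dates are made up for the example.
log = [
    Discovery("support", "Phrases that signal escalation risk",
              date(2025, 1, 6), date(2025, 1, 20)),
    Discovery("support", "Sentiment patterns agents had never tracked",
              date(2025, 1, 13)),
    Discovery("advisors", "Cues that a client is underinsured",
              date(2025, 1, 15), date(2025, 2, 14)),
]

print(capture_rate(log, date(2025, 1, 6), date(2025, 2, 2)))  # 0.75 per week
print(mean_days_to_scale(log))                                # 22.0 days
```

A spreadsheet would do the same job. The point is simply that each discovery gets a date when it was captured and a date when it was scaled, so the CoE can see whether knowledge is actually flowing up and spreading.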

The Bottom Line

For the first time in enterprise technology history, the knowledge about what's possible flows from the bottom up, not the top down. Your front-line workers, experimenting with AI in their daily work, are discovering capabilities and applications that leadership couldn't have predicted.

The AI Centre of Excellence isn't about deploying technology. It's about harvesting tacit knowledge, converting discoveries into capabilities, and building organisational learning systems that can adapt at the speed of AI evolution.

This is where AI and knowledge management meet. And it changes everything about how we think about Centres of Excellence.

The question isn't whether to build an AI CoE. The question is: Are you building a traditional implementation team or a knowledge conversion engine?

Because only one of those will succeed in the AI era.
